News

COMMUNICATIVE AI

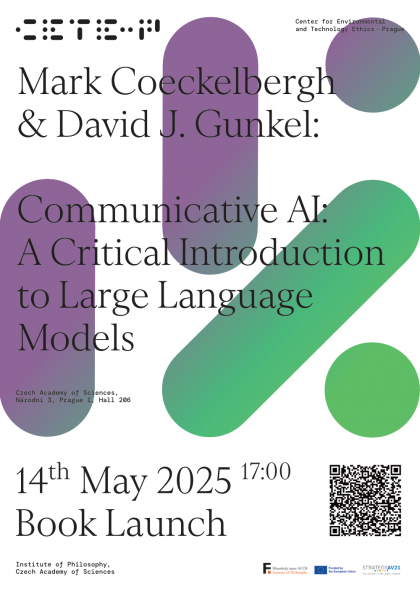

A book launch

On Wednesday, May 14, the launch of David Gunkel and Mark Coeckelbergh's new book *Communicative AI: A Critical Introduction to Large Language Models* (Polity, 2025) will take place in the main building of the Czech Academy of Sciences (CAS) at Národní 3. As followers of the Karel Čapek Center probably know, Mark Coeckelbergh is one of our external members and also a member of the Center for Environmental and Technological Ethics (CETE-P) at the Institute of Philosophy of the CAS. David Gunkel is an American philosopher known for numerous monographs on the philosophy of robotics and AI (*The Machine Question*, *Robot Rights*, *Person, Thing, Robot*, etc.).

The event is open to the general public and all interested parties are welcome!

Events

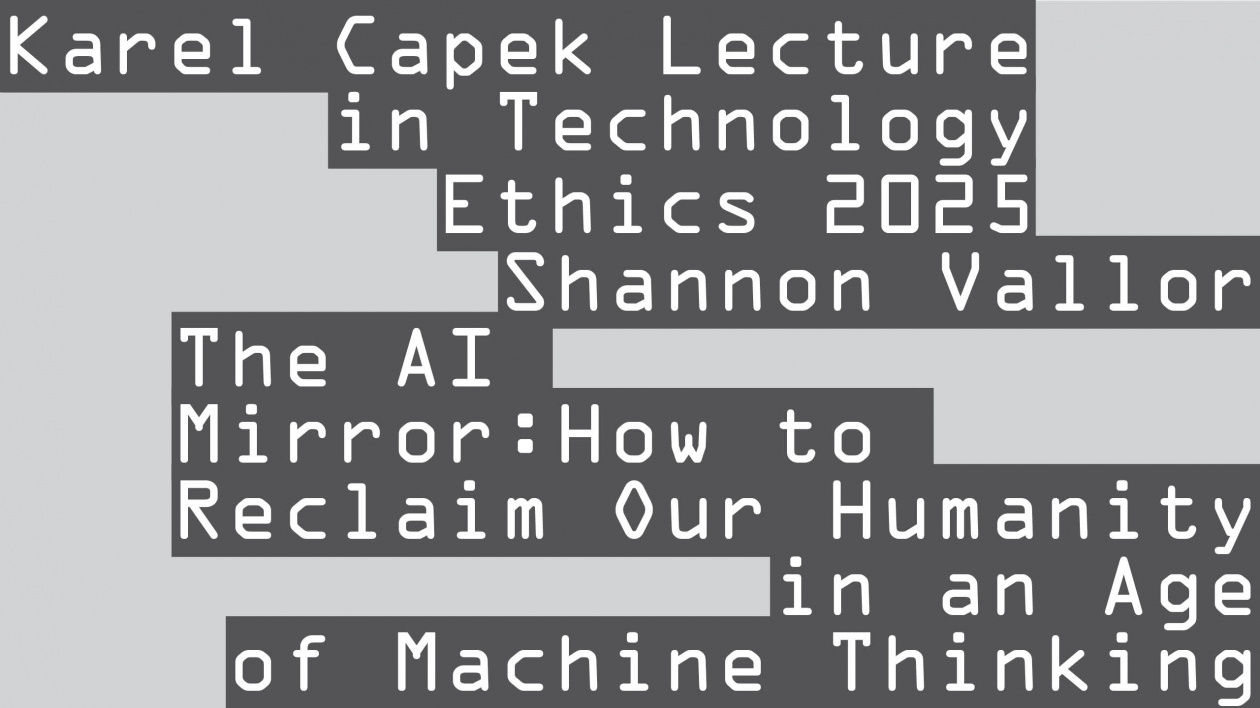

The AI Mirror: How to Reclaim Our Humanity in an Age of Machine Thinking

KAREL ČAPEK LECTURE IN TECHNOLOGY ETHICS 1

16 September 2025, 4 pm to 6 pm

Edison Filmhub, Jeruzalémská 1321/2, Praha 1

Free entry